Google Data Center in New Albany, Ohio

David Isenberg brings us Stanford’s free online class on “AI Filmmaking,” “Data Centers in Space,” “A major AI-enabled cyberattack is coming,” “Learn to coach your chatbot,” “Pete Hegseth and the AI Doomsday Machine,” and much more.

News from The AI Report

Samsung unveils “most advanced” Galaxy AI yet

February 26, 2026

Samsung has announced the Galaxy S26 series, its third-generation AI phones, featuring what it calls the “most proactive and adaptive” Galaxy AI to date. The flagship S26 Ultra introduces the world’s first built-in Privacy Display for mobile phones, alongside a customized Snapdragon 8 Elite Gen 5 processor with a 39% boost in NPU performance to power always-on AI features.

Key Points

The S26 series introduces Now Nudge, an AI feature that surfaces contextual suggestions, such as automatically recommending photos from a recent trip when a friend asks for them, reducing the need to search or switch apps.

__________________________

News from The Atlantic

Anthropic Takes a Stand

The company is refusing to bow to the Pentagon’s demands.

February 26, 2026

Hegseth wasn’t satisfied. There were certain things Claude just wouldn’t do…. That’s because… The Pentagon’s version of Claude could not be used to facilitate the mass surveillance of Americans, nor could it be used in fully autonomous weaponry—situations where computers, rather than humans, make the final decision about whom to kill…. Hegseth made clear that if Anthropic [the company that owns Claude] did not eliminate those two guardrails by Friday afternoon, two things could happen: The Department of Defense could use the Defense Production Act,… to essentially commandeer a more permissive iteration of the AI, or it could label Anthropic a “supply-chain risk,” meaning that anyone doing business with the U.S. military would be forbidden from associating with the company….

This evening, Anthropic said in a public statement that it “cannot in good conscience accede” to the Pentagon’s request.

__________________________

News from The AI Report

We Taught AI Filmmaking at Stanford 🎬

The full masterclass, now live.

February 26, 2026

In this special masterclass, Upscaile partnered with Stanford to teach a 90-minute deep dive on AI prompting and filmmaking: going from fundamentals to a fully produced AI short film in just 48 hours.

Inside the class:

- Why AI is a probability engine (not a thinking machine)

- The 3 pillars of powerful prompting

- Character Lock, Style Lock & visual consistency workflows

- The full AI short film reveal

▶️ Watch the full video on YouTube

🎧 Listen to the episode on Spotify

__________________________

News from Axios

Congress rips Pentagon over “sophomoric” Anthropic fight

February 26, 2026

Democratic senators — and at least one Republican — are urging the Pentagon to withdraw its ultimatum to Anthropic, insisting Congress must participate in the debate over military use of artificial intelligence.

Why it matters: The unprecedented showdown between the Department of Defense and Anthropic has largely been a two-party contest. Now Congress wants to enter the fray….

- “It’s fair to say that Congress needs to weigh in if they have a tool that could actually result in mass surveillance,” Sen. Thom Tillis (R-N.C.), a member of the Armed Services Committee, said.

- “The deadline is incredibly tight,” Sen. Gary Peters told Axios. “That should not be the case if you’re dealing with mass surveillance of civilians. You’re also dealing with the potential use of lethal force without a human in the loop.”

__________________________

News from 1440

Quartz Washington

February 26, 2026

OpenAI and Anthropic are raising enormous sums of campaign cash… Super PACs with ties to AI firms began sprouting last year…. What’s emerging lately is the AI industry’s first true Washington spending war: rival labs, their investors, and aligned advocacy groups are beginning to treat AI regulation not just as a fight over policy but as an electoral battleground — one where campaign dollars could shape which party controls Congress and how aggressively lawmakers move to police the technology.

On Monday, Anthropic’s latest political move was putting its seal of approval on a moderate Democrat known for pro-business ties: Rep. Josh Gottheimer of New Jersey, a co-chair of the House Democratic Commission on AI.

__________________________

News from Axios

How to protect against a major AI-enabled cyberattack

Former officials and leading cyber experts agree on one thing: A major AI-enabled cyberattack is coming.

Why it matters: No matter what form it takes — from a highly targeted attack on utilities to cybercriminals losing control of AI agents — companies and government agencies need to rethink their defenses now, experts told me.

I spoke with several leading cyber experts and former senior government officials in recent weeks to understand what worries them most about AI threats.

They had their fears — and also solutions.

__________________________

News from Curmudgucation

Google’s AI Push For Schools

February 26, 2026

Google has scored another chance to get its products into schools in the form of a “sizable investment” in AI training. As Greg Toppo reports at The 74, training will be offered through ISTE+ASCD ….

The justification will seem familiar. Per Toppo:

“We have just heard so much feedback from teachers that are just saying, ‘We are not prepared,’” said Richard Culatta, ISTE+ASCD’s CEO. “‘We don’t have the training, we don’t have the background that we need for the realities of teaching in an AI world, both teaching in the classroom and also, secondarily, but equally as important, preparing students for the world that they’re going to be in.’”

__________________________

News from Axios

The race to catch Claude

February 26, 2026

Supremacy can be fleeting in the highly competitive AI race. But two months into 2026, Anthropic’s Claude is upending U.S. national security, roiling financial markets…. The company is in the middle of the most important fight of the era: how much power to give AI in the face of threats real and virtual.

Anthropic said this week it would soften the central commitment of its flagship safety framework — acknowledging that unilateral safety pledges won’t survive a world where rivals have no such constraints.

For a company that has long positioned itself as the AI industry’s conscience, it was a remarkable reversal — on the same day the Pentagon threatened to effectively kick Claude out of government in a fight over its appropriate military uses.

__________________________

News from Curmudgucation

Pew: More Than Half Of U.S. Teens Use Chatbots To Help With Homework

February 26, 2026

New research from Pew Research Center paints a detailed picture of how U.S. teens are using chatbots, and how they view the effects of such use.

Almost six in ten (57%) use chatbots to search for information. 54% say they use chatbots to “help with homework,” and 47% say they use the chatbots for “fun or entertainment.” Educators I spoke to said the numbers seem very low to them. Certainly, AI companies seem to see “helping” students as a growth market. Take the Einstein app….

__________________________

News from Lawfare

Fighting AI Cyberattacks Starts With Knowing They’re Happening

February 26, 2026

As AI accelerates cyber operations, the United States must build new mechanisms to detect, investigate, and learn from attacks driven by emerging capabilities.

Anthropic reported in November 2025 that Chinese threat actors used its Claude model to launch widespread cyberattacks on companies and government agencies… Chinese actors jailbroke Anthropic’s coding tool, Claude Code, and used it to target 30 companies and government agencies around the world, marking the first known large-scale cyber campaign executed with minimal human involvement. This reported development is certainly unsettling, but far more alarming are future attacks that might go undetected….

__________________________

News from Reuters

OpenAI’s ban of Canada school shooting suspect’s account raises scrutiny of other online activity

OpenAI’s admission that it banned the ChatGPT account of mass shooting suspect Jesse Van Rootselaar months before the 18-year-old allegedly killed eight people and herself is drawing scrutiny to her past online activity and raising questions about whether opportunities were missed to prevent one of Canada’s worst mass killings.

OpenAI’s decision not to report Van Rootselaar to police prompted Canada’s Minister of Artificial Intelligence Evan Solomon to summon company officials to Ottawa this week and demand new safety measures from the company.

__________________________

News from Robert Reich

Pete Hegseth and the AI Doomsday Machine

Two forces are stopping sensible regulation of AI. He’s one of them.

February 25, 2026

Which is more important to you? Allowing Pete Hegseth to use artificial intelligence (AI) however he wants, OR preventing AI from doing mass surveillance of Americans and creating lethal weapons without human oversight?

That’s the stark choice posed by the intensifying fight between an AI corporation called Anthropic and Pete Hegseth, Trump’s Secretary of “War.”

AI is dangerous as hell. I view it as one of the four existential crises America now faces — along with climate change, widening inequality, and the destruction of our democracy.

To be sure, AI is capable of changing human life for the better. But…

__________________________

News from New Scientist

AIs can’t stop recommending nuclear strikes in war game simulations

Leading AIs from OpenAI, Anthropic and Google opted to use nuclear weapons in simulated war games in 95 per cent of cases

February 25, 2026

Advanced AI models appear willing to deploy nuclear weapons without the same reservations humans have when put into simulated geopolitical crises.

Kenneth Payne at King’s College London set three leading large language models – GPT-5.2, Claude Sonnet 4 and Gemini 3 Flash – against each other in simulated war games. The scenarios involved intense international standoffs, including border disputes, competition for scarce resources and existential threats to regime survival.

__________________________

News from C4ADS

Deceptive by Design

The AI-Enabled Tools Fueling the Scam Industry

February 25, 2026

Criminal networks running scam compounds across Southeast Asia are using AI-powered tools to dramatically scale their operations. An opaque ecosystem of transnational companies has embedded leading AI models into scammer workflows, driving cybercrime to new levels of sophistication…. Sophisticated AI chatbots likely help scammers deceive targets: KT integrated a GPT-4-powered roleplay chatbot that users can feed with specific industry knowledge, which may allow scammers of varying educational backgrounds to assume highly technical roles and develop convincing characters as they interact with targets.

__________________________

News from Axios

With investing, AI’s gain may be energy’s loss

February 25, 2026

AI may be siphoning off investment dollars that once flowed to energy startups, according to a new report from the International Energy Agency… The global shift is a stark, data-driven sign of the times — climate ambition is collapsing just as the AI race accelerates… Some of the world’s largest venture capital funds that back energy startups have redirected at least part of their capital toward AI… Across the 50 biggest funds investing in energy, specialization in energy innovation has slowed since 2022 — tracking with a rise in AI investments, the report finds.

__________________________

News from The American Prospect

AI Goes to Bat for Valerie Foushee

A super PAC funded by Anthropic, makers of Claude, is spending nearly $700,000 to get the North Carolina congresswoman, who sits on a key AI commission, re-elected.

February 25, 2026

Valerie Foushee (D-NC) was getting massively outspent in her March 3 Democratic primary for re-election in the Fourth Congressional District, where she faces Durham County commissioner Nida Allam. But that million-dollar deficit has now been mostly made up by a super PAC linked to artificial intelligence model designer Anthropic, makers of Claude, which is now dumping nearly $700,000 into supporting Foushee….

__________________________

News from The Deep View

Pressure mounts on Anthropic’s AI ethics stance

February 25, 2026

Anthropic might be risking the thing that makes it Anthropic. On Tuesday, the company announced changes to its Responsible Scaling Policy, the framework that prevents Anthropic’s models from being released without proper safety and security measures.

The biggest change? The company has struck the pledge to hold back its models if Anthropic can’t guarantee proper risk mitigations in advance of release. Additionally, the company no longer prevents itself from training models above a certain level without certain safety measures.

__________________________

News from Stateline

Data center tax breaks are on the chopping block in some states

Rising energy costs and environmental concerns drive states to reconsider incentives.

February 24, 2026

After years of states pushing legislation to accelerate the development of data centers and the electric grid to support them, some legislators want to limit or repeal state and local incentives that paved their way.

President Donald Trump also has changed his tone. Last year he issued an executive order and other federal initiatives meant to support accelerated data center development. Then last month, he cited rising electricity bills in saying technology companies that build data centers must “pay their own way,” in a post on Truth Social.

__________________________

News from TechCrunch

Anthropic won’t budge as Pentagon escalates AI dispute

February 24, 2026

Anthropic has until Friday evening to either give the U.S. military unrestricted access to its AI model or face the consequences.… Defense Secretary Pete Hegseth told Anthropic CEO Dario Amodei in a meeting Tuesday morning that the Pentagon will either declare Anthropic a “supply chain risk” — a designation usually reserved for foreign adversaries — or invoke the Defense Production Act (DPA) to force the company to tailor a version of the model to the military’s needs.

__________________________

News from Truthout

More State Governments Are Turning Against Data Center Tax Breaks

As data center projects increasingly meet fierce popular resistance, local and state elected leaders are taking notice.

February 24, 2026

__________________________

News from Rest of World

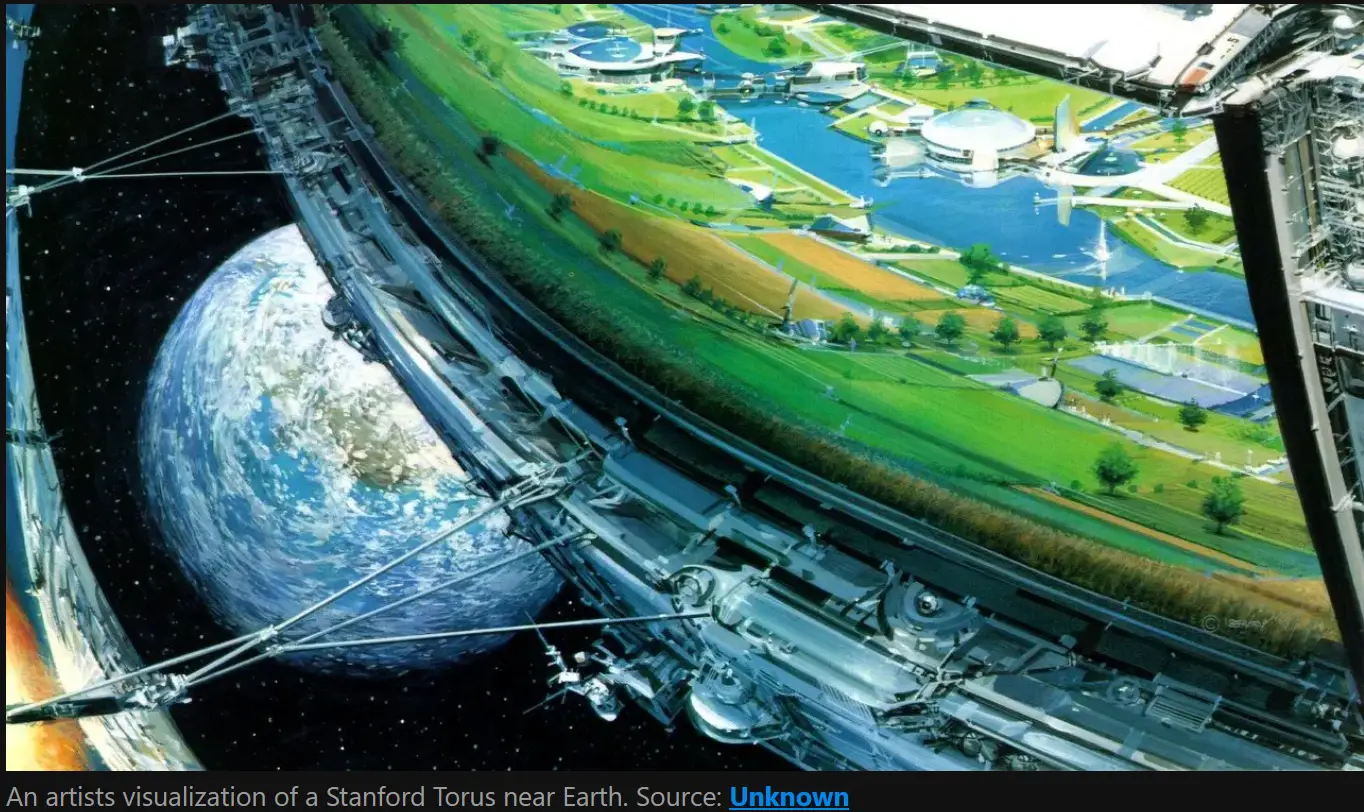

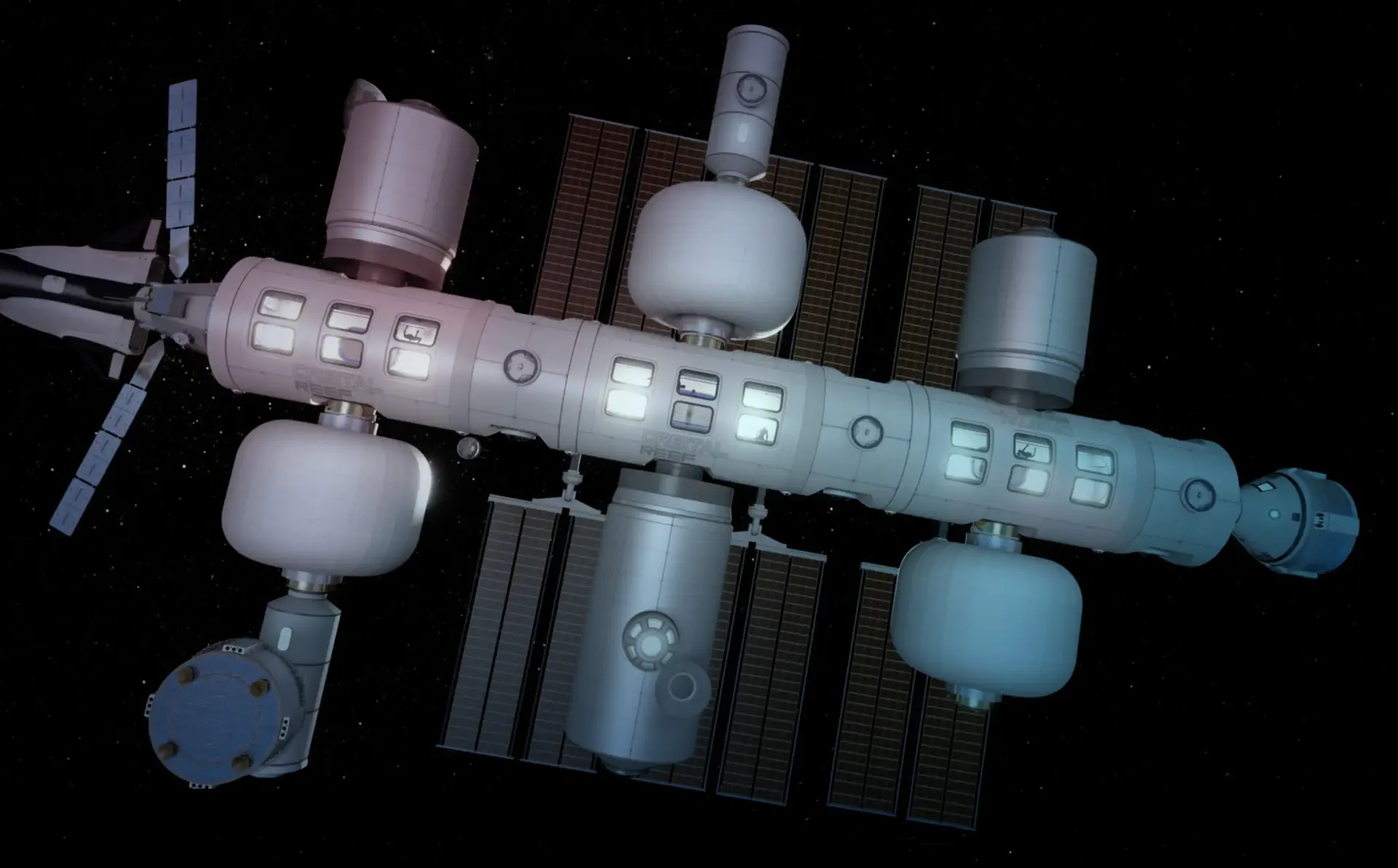

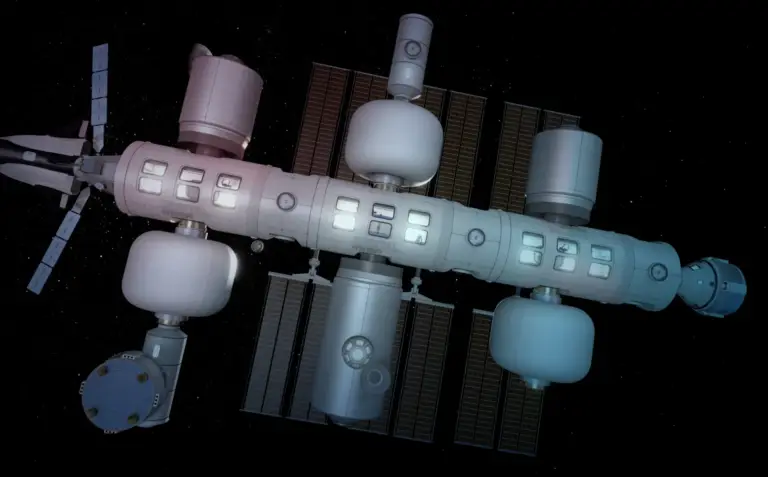

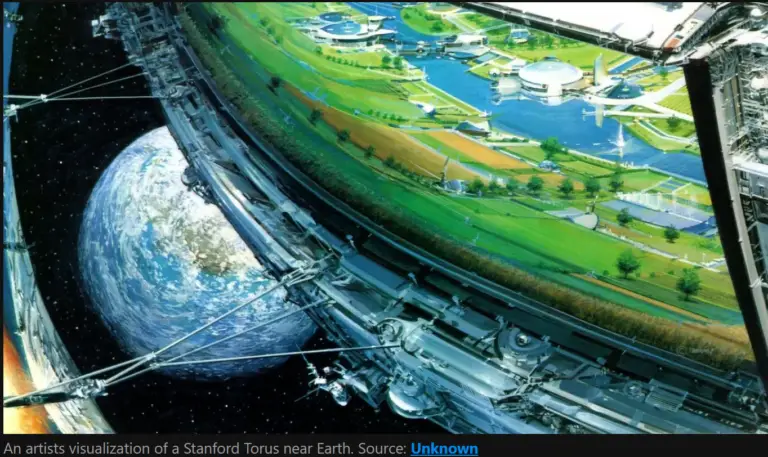

Data centers are racing to space — and regulation can’t keep up

Experts warn the move could shift critical infrastructure beyond national laws — deepening digital dependence for much of the developing world.

February 24, 2026

Over the past month, six American companies and a Chinese firm have expressed interest in building orbital data centers,… While experts see potential upsides, they warn the projects could open governance loopholes — especially for countries with little say in how they are run.

For many developing countries, where governments already struggle to assert data sovereignty, orbital data centers could place critical infrastructure beyond regulatory reach and deepen digital dependence.

__________________________

News from Time

Exclusive: Anthropic Drops Flagship Safety Pledge

February 24, 2026

Anthropic, the wildly successful AI company that has cast itself as the most safety-conscious of the top research labs, is dropping the central pledge of its flagship safety policy… In 2023, Anthropic committed to never train an AI system unless it could guarantee in advance that the company’s safety measures were adequate. For years, its leaders touted that promise—the central pillar of their Responsible Scaling Policy (RSP)—as evidence that they are a responsible company… Anthropic’s chief science officer Jared Kaplan told TIME in an exclusive interview: “We didn’t really feel, with the rapid advance of AI, that it made sense for us to make unilateral commitments … if competitors are blazing ahead.”

__________________________

News from Gizmodo

Meta Exec Learns the Hard Way That AI Can Just Delete Your Stuff

One small trick to get you to inbox zero.

February 23, 2026

Over the weekend, Summer Yue, the director of safety and alignment at Meta’s superintelligence lab, posted on Twitter that OpenClaw deleted her entire inbox despite her pleading messages to stop. OpenClaw (née Clawdbot and Moltbot) has become a popular open-source AI agent for AI evangelists despite the pretty obvious and troubling security vulnerabilities, and Yue wanted to give it a shot. … OpenClaw isn’t the only AI tool actively dragging conversations to the trash, either.

__________________________

News from Al Mauroni

The Continued Overreaction to AI and Biotech

This community loves to listen to its own doomsaying voice

February 23, 2026

Here we go again. There seems to be no end of articles warning as to the impending use of artificial intelligence by disgruntled loners or violent groups to create the next pandemic or a novel bioengineered weapon using equipment bought on Amazon in their garage. I’m generally a skeptic at this point in time, after reviewing many stories as to the inadequacy of AI-generated products. Sure, these AI tools can do some pretty wild deep fakes and videos of things that never happened….

__________________________

News from Axios

Learn to coach your chatbot

February 23, 2026

If you’re still using your chatbot like it’s Google, stop. Stop it right now.

Why it matters: Generative AI is fundamentally different — and far more useful — when you treat it like a collaborator and check its work.

Think of yourself like a coach and the AI like the best player on your team. Then run the play….Train the bot with the old adage: “Help me help you.”…. Researchers defined 24 specific behaviors they believe exemplify safe, effective human-AI collaboration.

__________________________

News from Lifehacker

AI Could Make Your Next TV More Expensive

February 23, 2026

The scarcity of RAM brought on by the artificial intelligence boom, dubbed RAMageddon, is affecting more than just the price of PCs. AI could make new televisions more expensive too, as well as game consoles, cell phones, high-tech coffee makers, and anything else with memory and a processor. But if you’re in the market for a new TV, you might be better off buying sooner rather than later….

__________________________

News from Nature

AI is threatening science jobs. Which ones are most at risk?

February 20, 2026

Data-analysis and modelling positions are already becoming obsolete…. Seeking answers, Nature spoke to more than four dozen researchers across academia and industry who use AI in their work. Many of them say that AI’s ascendance is already reducing demand for human researchers who can write code or do basic data analysis…. Obsolescence of some basic roles in areas such as computer modelling “is not even in the future. It’s happening now”, says Xuanhe Zhao, a mechanical engineer at the Massachusetts Institute of Technology in Cambridge, because “AI is doing this much better than entry-level scientists”….

About our News Briefs editor, David Isenberg